Efficient and Robust Learning Architectures for Physical AI

Brainoid

A Problem in Training Physical AI

AI "world models" (systems that learn from experience to predict and act in physical environments) are central to physical AI: robotics, autonomous systems, and simulation of the physical world. Yet today's approaches demand enormous amounts of data and compute to train, and some notable experts believe current training architectures will not scale for physical AI as they did for token prediction in language models. The dominant strategy of simply scaling data and compute is hitting diminishing returns, with costs growing exponentially for incremental gains, and a lack of robustness threatens the physical AI industry's ability to deliver on its promises.

Most notably, current systems still lag behind biological intelligence in sample efficiency by a factor of 10,000× to 1,000,000×, which indicates a large and potentially transformative runway for improvement. The existence of biological intelligence also suggests that the bottlenecks stem from limitations of today's architectures rather than from fundamental limits. Brainoid Technologies is a bet on the coming fundamental improvements in AI learning architectures that move beyond these inefficiencies.

Our Solution

Brainoid Technologies is building a structured alternative to the current end-to-end generative approaches to physical AI, informed by how biological systems reason robustly and differently about the physical world. The product packages a set of technological innovations into a standard AI development tool used to train and deploy AI models: specifically, an MLOps software layer that will make these techniques practical and accessible to teams without massive R&D budgets.

Technical Approach: Instead of the black-box generative next-token prediction used by the dominant end-to-end AI architectures, our approach is driven by system architectures that incorporate two key ideas:

Compact Latent Representation: learning compact latent representations that capture only what matters for physical reasoning;

Acceleration Through Structure: exploiting geometric and algebraic structure within those representations to dramatically reduce computational cost.

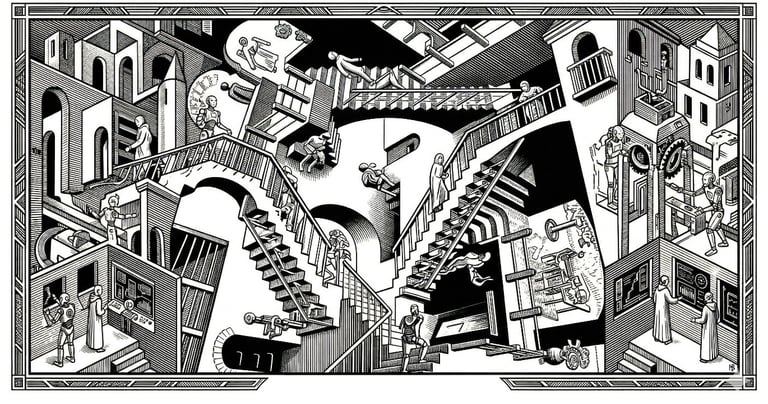

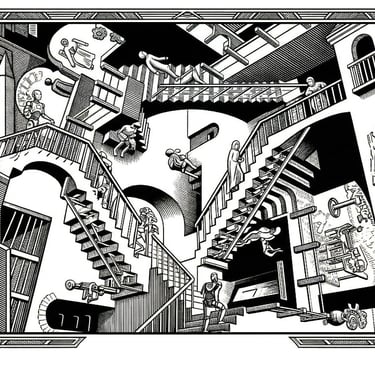

What does that mean? Imagine a banana and mentally rotate it: we don't simulate every physical detail; we reason over an abstract representation. This abstraction both compresses what needs to be stored (latent representation) and minimizes the computation required for physical reasoning (accelerated computation).
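The banana intuition can be made concrete with a toy sketch. This is an illustration only, not Brainoid's architecture: it assumes a 2D object stored as a fixed template of points plus a two-number pose latent `(cos θ, sin θ)`, and all function names here are hypothetical. A sequence of rotations is composed on the two-number latent, and the full set of points is touched only once at the end, when a concrete result is needed.

```python
import math

def canonical_banana(n=500):
    """Hypothetical dense object: n points along a flattened arc (a crude 2D banana)."""
    ts = [math.pi * i / (n - 1) for i in range(n)]
    return [(math.cos(t), 0.4 * math.sin(t)) for t in ts]

def rotate_dense(points, angle):
    """Baseline: rotate every point explicitly. Cost grows with the number of points."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y) for x, y in points]

def rotate_latent(z, angle):
    """Rotate the pose latent z = (cos θ, sin θ).

    Composing rotations is a single complex multiplication, so the cost is
    constant regardless of how many points describe the object.
    """
    c, s = math.cos(angle), math.sin(angle)
    return (z[0] * c - z[1] * s, z[0] * s + z[1] * c)

def decode(z, template):
    """Map the pose latent back to explicit points, only when they are needed."""
    c, s = z
    return [(c * x - s * y, s * x + c * y) for x, y in template]

template = canonical_banana()
angles = [0.3, -1.1, 2.0]  # a sequence of "mental rotations" (radians)

# Dense route: every step touches every point.
dense = template
for a in angles:
    dense = rotate_dense(dense, a)

# Latent route: every step touches two numbers; decode once at the end.
z = (1.0, 0.0)  # identity pose
for a in angles:
    z = rotate_latent(z, a)
latent_result = decode(z, template)

# Both routes should agree up to floating-point error.
max_err = max(abs(px - qx) + abs(py - qy)
              for (px, py), (qx, qy) in zip(dense, latent_result))
print(f"max difference between routes: {max_err:.2e}")
```

The design point is that the latent carries the group structure of the transformation (here, planar rotations), so multi-step reasoning can happen in the compact space. Learned representations for real physical scenes are far richer than this hand-built pose code; the sketch only shows why structure in the latent can cut computation.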

FAQ

Is this rooted in existing literature? This approach aligns with Yann LeCun's Joint Embedding Predictive Architecture (JEPA), which has recently reached meaningful levels of technical maturity yet remains significantly underutilized in industry due to an immature ecosystem. The acceleration-through-structure component builds directly on the founder's prior work on multi-order-of-magnitude software acceleration through structure. The acceleration components have the potential to compound the benefits of latent representation architectures (like JEPA), making this a timely and high-leverage research direction with the potential to yield a significant competitive advantage.

Can the technology be packaged into different products? The MLOps layer can be productized for end users in multiple ways: API, cloud service, or software license. If end-user adoption takes off, lower-layer architectural optimization (closer to hardware) could take the comparative advantages to the next level. This is where it becomes possible to create a durable ecosystem beyond the initial MLOps product.

How early is this? Pre-MVP, with a strong foundation from the core IP creation phase and an MVP design. We have notable end-user interest from European autonomous driving and space-related physics simulation, and we are forming the team to move from a small, focused IP-building phase to a scale-up company bringing these solutions to the physical AI space.

Supported by:

In collaboration with: